

A streamlined platform for building and deploying AI models on Ceva NPUs with top efficiency and precision.

Ceva-NeuPro Studio is a comprehensive software development environment designed to streamline the development and deployment of AI models on Ceva-NeuPro NPUs. It offers a suite of tools optimized for the Ceva NPU architectures, providing network optimization, graph compilation, simulation, and emulation, so that developers can train, import, optimize, and deploy AI models with high efficiency and precision.


The Solution

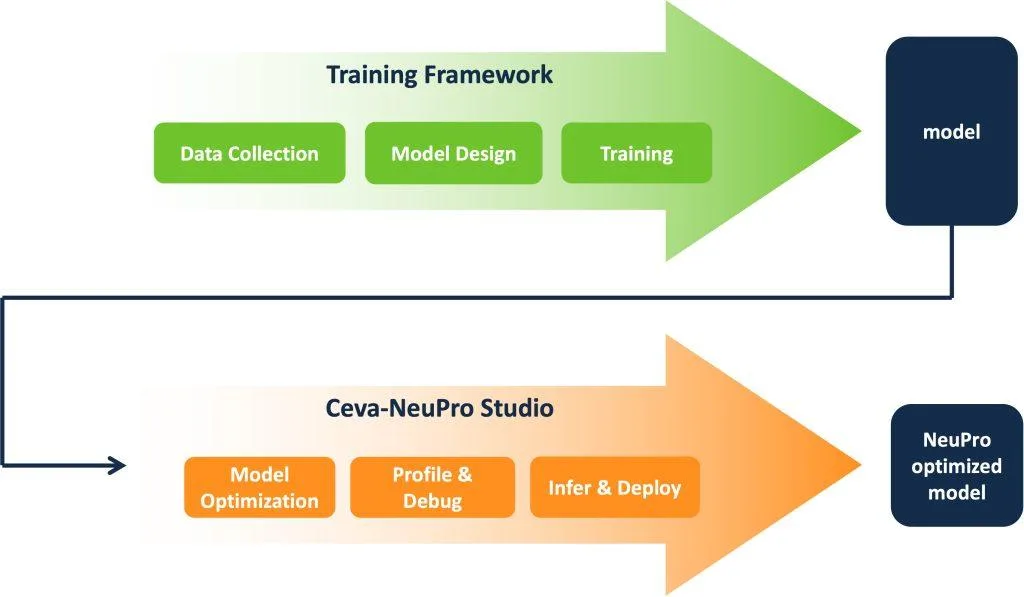

Ceva-NeuPro Studio provides the tools for a complete end-to-end flow that transforms trained neural network models into executable code on Ceva-NeuPro NPUs. It has two operating modes. In the Ceva AI Model mode, users can select from a wide range of common neural-network models already trained and optimized by Ceva. This allows users to focus on their application rather than on model design and training. This mode may also be used to provide benchmarks for optimizing the user’s own models.

In the Bring-Your-Own-Model mode, users may import their own trained models from the Caffe, PyTorch, ONNX, TensorFlow, or Keras frameworks. In either mode, models are imported and transformed using quantization and a comprehensive suite of optimizations. Graph compilation is then performed in TVM or microTVM, which are also available for run-time inference. The resulting code may be simulated and debugged in an Eclipse-based IDE or executed using hardware emulation.
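To make the quantization step more concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is a generic textbook scheme chosen for illustration, not Ceva's algorithm or any Ceva-NeuPro Studio API; the function names `quantize_int8` and `dequantize` and the sample weights are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to int8 codes (illustrative only)."""
    max_abs = max(abs(v) for v in values)
    # Guard against an all-zero tensor, where any scale would work.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest step and clamp to the symmetric int8 range.
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map int8 codes back to approximate float values."""
    return [q * scale for q in quantized]


# Hypothetical weights standing in for one layer of a trained model.
weights = [0.50, -1.27, 0.03, 1.00]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# Rounding error per element is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight is stored as an 8-bit code plus one shared scale, which is the basic trade of precision for memory and compute that quantization tools make. A production flow would typically add per-channel scales, calibration data, and accuracy checks.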

During development, users may also import Ceva audio codecs or feature-extraction algorithms, as well as user-developed code. The resulting code may be partitioned across the multiple processing elements of the NPUs or user-defined accelerators and profiled in the Arch Planner tool to explore the optimal use of computing and memory resources.

Benefits

Ceva-NeuPro Studio provides a bridge between cloud or PC-trained AI models and execution on energy-efficient, cost-conscious edge-AI devices, reducing the time between model training and optimized, thoroughly tested edge deployment. Taking in trained network models, the Ceva-NeuPro Studio environment optimizes and compiles the models, producing C/C++ code for Ceva-NeuPro NPUs in a visual Eclipse IDE.

There, developers can compile the C/C++ code, simulate execution, profile performance, apply a full suite of debug tools, and explore efficient mappings of models onto the available NPU mechanisms. Along the way, users may include Ceva audio- or image-processing routines and user-written code. Throughout, industry-standard tools are used, and Ceva-NeuPro Studio maintains a common user interface for all target Ceva-NeuPro cores.

Key Features

- Import from major training frameworks including Caffe, Keras, PyTorch, ONNX, TensorFlow, and LiteRT

- Support for pre-trained Ceva AI models and Bring Your Own Model (BYOM) approaches

- Powerful quantization and compression that exploit Ceva-NeuPro NPU features

- Graph compiler produces optimized code to implement networks

- Software or hardware simulation and debug in Eclipse IDE with visual user interface

- Performance profiling using Arch Planner for system and memory partitioning

- Ability to include Ceva libraries, audio- and image-processing functions, hardware-ready Ceva Model Zoo models, and user code

- Target NPUs include Ceva-NeuPro-Nano, Ceva-NeuPro-M, and user-defined accelerators