NPU IP for Embedded ML

Ceva-NeuPro-Nano - An ultra-efficient, self-contained NPU solution designed to execute Embedded ML workloads across low-power, compact AIoT devices.

Ceva-NeuPro-Nano: Ultra-Efficient, Self-Sufficient Edge NPU for Embedded ML

This Edge NPU, the smallest in Ceva's NeuPro NPU product family, balances ultra-low power and high performance in a small area to efficiently execute Embedded ML workloads across AIoT product categories, including Hearables, Wearables, Home Audio, Smart Home, Smart Factory, and more. Ranging from 10 GOPS up to 200 GOPS per core, Ceva-NeuPro-Nano is designed to enable always-on audio, voice, vision, and sensing use cases in battery-operated devices across a wide array of end markets. Ceva-NeuPro-Nano turns the possibilities of Embedded ML into reality for low-cost, energy-efficient AIoT devices.

Key Features

- Fully programmable to efficiently execute Neural Networks, feature extraction, signal processing, audio and control code

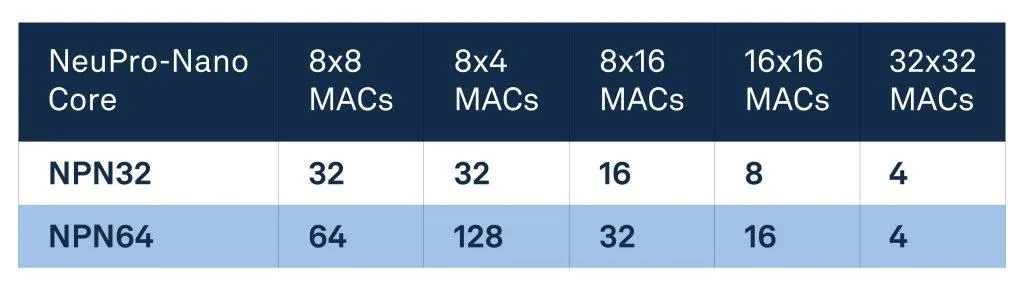

- Scalable performance to meet a wide range of use cases, with MAC configurations of up to 64 int8 MACs per cycle (natively 128 4×8 MACs per cycle)

- Two NPU configurations are available to address a wide variety of use cases:

  - Ceva-NPN32 with 32 4×8, 32 8×8, 16 16×8, 8 16×16, 4 32×32 MAC operations per cycle

  - Ceva-NPN64 with 128 4×8, 64 8×8, 32 16×8, 16 16×16, 4 32×32 MAC operations per cycle and 2× performance acceleration using 50% weight sparsity (Sparsity Acceleration)

- Future-proof architecture that supports the most advanced ML data types and operators, including 4-bit to 32-bit integer support and native transformer computation

- High ML performance across use cases, with Sparsity Acceleration, acceleration of non-linear activation types, and fast quantization (up to 5× acceleration of internal re-quantizing tasks)

- Powerful microcontroller and DSP capabilities with a CoreMark/MHz score of 6.0

- Ultra-low memory requirements achieved with Ceva-NetSqueeze™, yielding up to 80% memory footprint reduction through direct processing of compressed model weights without the need for an intermediate decompression stage. NetSqueeze solves a key bottleneck inhibiting the broad adoption of AIoT processors today

- Ultra-low energy achieved through innovative energy optimizations, including dynamic voltage and frequency scaling support tunable for the use-case, and dramatic energy and bandwidth reduction by distilling computations using weight-sparsity acceleration

- Complete, simple-to-use Ceva-NeuPro Studio AI SDK, optimized to work seamlessly with leading open-source AI inference frameworks, such as LiteRT for Microcontrollers and µTVM

- Model Zoo of pre-trained and optimized machine learning models covering Embedded ML audio, voice, vision and sensing use cases

- Comprehensive portfolio of optimized runtime libraries and off-the-shelf application-specific software
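The MAC-per-cycle figures in the Key Features list can be turned into a rough peak-throughput estimate. The sketch below is only illustrative: the clock frequency is a hypothetical input (the page does not state one), and counting each MAC as two operations is a common convention assumed here, not a statement of how the quoted GOPS range was derived.

```python
# MACs per cycle by operand width, copied from the Key Features list.
MACS_PER_CYCLE = {
    "Ceva-NPN32": {"4x8": 32, "8x8": 32, "16x8": 16, "16x16": 8, "32x32": 4},
    "Ceva-NPN64": {"4x8": 128, "8x8": 64, "16x8": 32, "16x16": 16, "32x32": 4},
}


def peak_gops(core: str, mode: str, clock_mhz: float,
              sparsity_speedup: float = 1.0) -> float:
    """Estimate peak throughput in GOPS.

    Assumptions (not from the product page):
      - one MAC counts as two operations (multiply + accumulate)
      - clock_mhz is a hypothetical operating frequency
      - sparsity_speedup is 1.0 for dense weights, or up to 2.0 on
        Ceva-NPN64 with 50% weight sparsity
    """
    macs = MACS_PER_CYCLE[core][mode]
    ops_per_second = 2 * macs * clock_mhz * 1e6 * sparsity_speedup
    return ops_per_second / 1e9


if __name__ == "__main__":
    hypothetical_clock_mhz = 500.0  # illustrative value only
    for core in MACS_PER_CYCLE:
        dense = peak_gops(core, "8x8", hypothetical_clock_mhz)
        print(f"{core} int8 dense at {hypothetical_clock_mhz:.0f} MHz: {dense:.1f} GOPS")
```

Real throughput depends on the achievable clock in a given process node, memory bandwidth, and how well a model maps onto the MAC array, so this estimate is an upper bound under the stated assumptions.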

The Solution

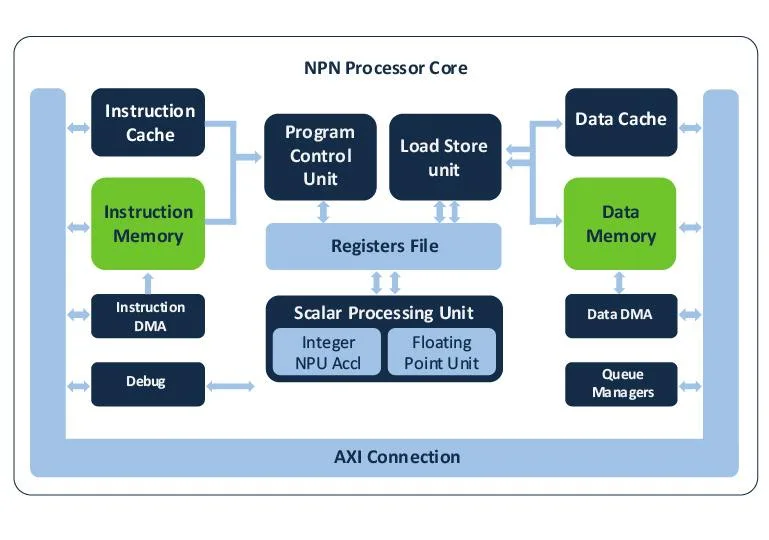

Ceva-NeuPro-Nano is a fully stand-alone neural processing unit (NPU), not an AI accelerator, and does not require a host CPU or DSP. The IP core includes all processing elements of a self-contained NPU, including code execution and memory management.

Key architectural capabilities:

- Fully programmable architecture for executing neural networks, feature extraction, control code, and DSP code

- Supports advanced ML data types and operators including native transformer computation, sparsity acceleration, and fast quantization

- Efficiently executes a wide range of neural networks with high performance

Two cores are available:

- Ceva-NPN32

- Ceva-NPN64 (includes hardware sparsity acceleration for 2× effective performance)

Additional advantages:

- Hardware-based weight decompression reduces memory footprint by up to 80%

- Full support through Ceva-NeuPro Studio, enabling importing, compiling, and debugging models from frameworks such as LiteRT and µTVM
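To put the "up to 80%" memory footprint figure in context, the arithmetic can be sketched as follows. The model size is a hypothetical example, and 80% is the stated upper bound rather than a guaranteed result for any particular model.

```python
def weight_footprint_kib(num_weights: int, bits_per_weight: int,
                         reduction: float = 0.0) -> float:
    """Return weight storage in KiB after applying a fractional reduction.

    reduction is the fraction of storage saved (0.0 = uncompressed,
    0.8 = the "up to 80%" upper bound quoted for weight compression).
    """
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in the range [0.0, 1.0)")
    raw_bytes = num_weights * bits_per_weight / 8
    return raw_bytes * (1.0 - reduction) / 1024


if __name__ == "__main__":
    # Hypothetical model: 262,144 int8 weights (not taken from the product page).
    n_weights = 262_144
    raw = weight_footprint_kib(n_weights, 8)
    best_case = weight_footprint_kib(n_weights, 8, reduction=0.8)
    print(f"uncompressed: {raw:.1f} KiB, best case: {best_case:.1f} KiB")
```

Because the compressed weights are processed directly, the saving applies to the stored weights themselves; activation and code memory are not covered by this estimate.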

Benefits

The Ceva-NeuPro-Nano NPU family is designed to bring AI to the Internet of Things (IoT) through efficient deployment of Embedded ML models on low-power, resource-constrained devices. The optimized, self-sufficient architecture of Ceva-NeuPro-Nano NPUs delivers superior power efficiency, a smaller silicon footprint, and optimal performance for Embedded ML workloads compared with existing processor solutions that combine a CPU or DSP with a separate AI accelerator.

Ceva-NeuPro Studio builds on open-source AI frameworks and provides an easy-to-use software development environment, extensive pre-optimized models in the Ceva Model Zoo, and a wide range of runtime libraries, speeding product development for chip designers, OEMs, and software developers.

Technology Partner

The EDGE AI FOUNDATION (formerly tinyML Foundation) is a global non-profit community of innovation, collaboration, advocacy and education for efficient, affordable and scalable Edge AI technologies.